The advent of transformer-based natural language processing (NLP) models, large language models (LLMs) and generative models has made advanced machine learning (ML) systems accessible to everyone with internet access through tools like ChatGPT. Unfortunately, malicious actors can also use these models to create phishing emails.

In this post, we’ll explain how Proofpoint defends against artificial intelligence (AI)-generated phishing threats and deploys advanced ML models throughout our detection systems.

Defending against AI-generated phishing threats

Anyone with access to the internet can now take advantage of recent developments in AI, ML and NLP. ChatGPT is one of those advances.

While threat actors are using systems such as ChatGPT to draft phishing emails, these models can only produce text. Some recipients may be more likely to click on links in AI-generated phishing emails than similar links in human-written messages. Perhaps the style of the text may explain this increase in willingness to click. These models can produce professional business English with standard English grammar, which may lend credibility to the text as compared to stereotypical phishing emails with broken grammar and awkward phrasing.

Some people are concerned about generative models creating highly targeted phishing emails, but the threat is often overstated. Even though these models can wax poetic on just about any topic, these models don’t currently understand how a particular vendor or your boss writes, nor do they understand what would motivate a given recipient.

The text generated by LLMs tends to be generic and lacks the personalised feel of known correspondents. Additionally, these models aren’t trained on current events, which limits their ability to create timely phish lures. As a result, these publicly available models aren’t yet advanced enough to compellingly impersonate your professional network.

Also, while AI-generated phishing emails may have improved grammar over messages written by non-native English speakers, they’re unlikely to enhance spear-phishing attacks significantly.

Figure 1. A generated spear phish that does not read like our CEO.

The text these models generate is only one aspect of an email message. Comprehensive email security solutions consider multiple factors beyond the body text, such as risky URLs, malicious attachments and unusual communication patterns. It’s important to note that systems like ChatGPT can only create email text and perhaps HTML formatting—but they can’t send full emails from impersonated infrastructure.

The use of DMARC for sender authentication remains an effective tool for thwarting impersonation attempts. To gain a deeper understanding of how different elements of an email can be used to improve detection, watch our webinar on AI and ML in our detection pipeline. Proofpoint remains a formidable defence against AI-generated phishing threats.

Using transformer models at Proofpoint

The “T” in ChatGPT (and BERT, and others) stands for “transformer”. These ML models are tailored for and specialised in processing language and text. At Proofpoint, we use these kinds of models alongside many others. So, it’s helpful to provide some examples of where we’ve found such models useful.

While free-to-use services like ChatGPT have made transformer-based models publicly available, Proofpoint has been using transformer models in our products for years. Without delving too deeply into the technicalities, it’s important to note that transformer models can handle input sequences of varying lengths and accurately represent intricate relationships between words in a sequence.

With this context, let’s explore a few ways that Proofpoint incorporates transformers in our products.

To enable more robust people risk assessments, we created a Title Classifier that connects with Active Directory to understand employee job function and seniority. Because directory titles tend to be short, the attention of transformers may not seem necessary. However, the transformers allow Title Classifier to leverage the position and context of title components. For example, “manager” takes on different functions in the title “product manager” than in “manager, marketing”.

Transformers can process text of varying lengths from job titles to entire emails. In fact, they’re particularly well-suited for processing emails because they can effectively handle sequences of variable length and capture complex relationships between words in the sequence. That makes them ideal for processing the complex and diverse structures that exist in freeform email text.

Proofpoint uses transformer models to create sophisticated email security products that effectively identify and protect against diverse threat types, including phishing, malware and spam. One such model is integrated into our Closed-Loop Email Analysis and Response (CLEAR) product. CLEAR allows users to report phishing emails with a single click and automates much of the response from the security operations centre (SOC). Not all emails that users report are phishing, so Proofpoint uses a model derived from BERT to consider message text and other indicators to identify likely harmless emails and reduce the burden on the SOC.

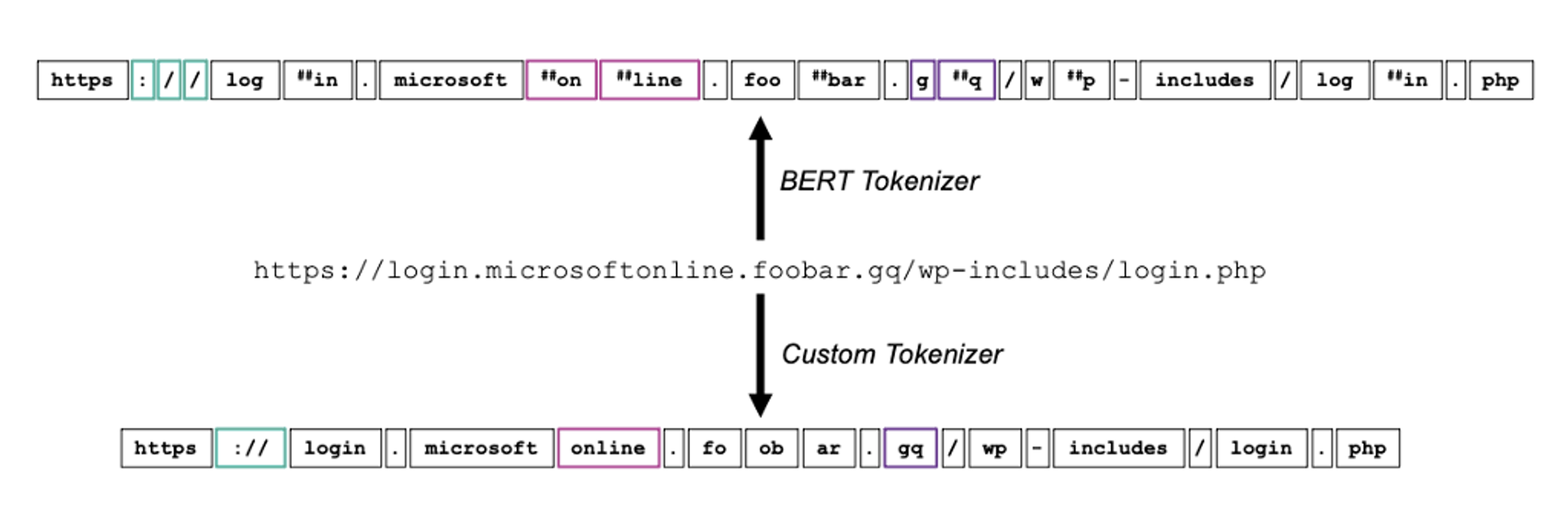

Proofpoint is using transformers to improve NLP tasks, and we’ve also created novel models to better process the language of malware. One such example is a tool for campaign discovery codenamed CampDisco. While LLMs like BERT and GPT have revolutionised NLP, they rely on rigid tokeniser models. By using a custom tokeniser for malware forensics, we created a smaller and more performant neural network that accurately clusters malware campaigns.

CampDisco also demonstrates a tenant of ML at Proofpoint: Humans and machine learning are better together. CampDisco is a tool the Proofpoint threat research team uses to better understand the threat landscape and accelerate our time to detection.

Figure 2. An example of the CampDisco custom tokeniser in action.

How to create robust ML at scale

Proofpoint uses ML in all aspects of our products. As a data-first company, it’s inherent in our approach to finding innovative solutions to protect our customers from the constantly evolving threat landscape. Transformer models are only one aspect of our ML arsenal, but the principles that drive their success are similar across all our systems.

To achieve industry-leading results, it’s essential to have powerful models that can process vast amounts of data efficiently and effectively. Also, having relevant data is crucial for training models to accurately identify threats and differentiate between benign and malicious activities. This data must also be continuously updated to keep pace with the ever-changing threat landscape.

Robust operational processes are also critical for successfully deploying and maintaining these models at scale. That includes monitoring model performance, validating the quality of the data being used and continuously refining the models to ensure their accuracy and effectiveness. Our ML experts have years of experience and a deep understanding of these critical success factors, which enables Proofpoint to deliver innovative and effective solutions to our customers.

Models

ML models are the foundation for any data science programme, and they’re widely available in free and open-source implementations. However, what sets apart an expert data science team from a novice one is their ability to understand when and how to use these available models.

An expert team has a deep understanding of different model architectures and their strengths and weaknesses. They know when to use a particular model for a specific problem and how to get the most value from the data by using the right model. They’re also skilled in tailoring models to fit the unique requirements of a particular use case, which can greatly improve the performance of the model.

Beyond our internal research programmes, our machine learning teams partner with universities to advance the state-of-the-art in machine learning. These university programmes develop model architectures on public datasets and are incorporated into Proofpoint systems by our data scientists.

At Proofpoint, our team of ML experts has a wealth of experience in various fields. Their combined expertise and experience help us provide high-quality, customised solutions for our clients. We use our team’s ML expertise to extract the most value from data and deliver results that meet the needs of each project.

Data

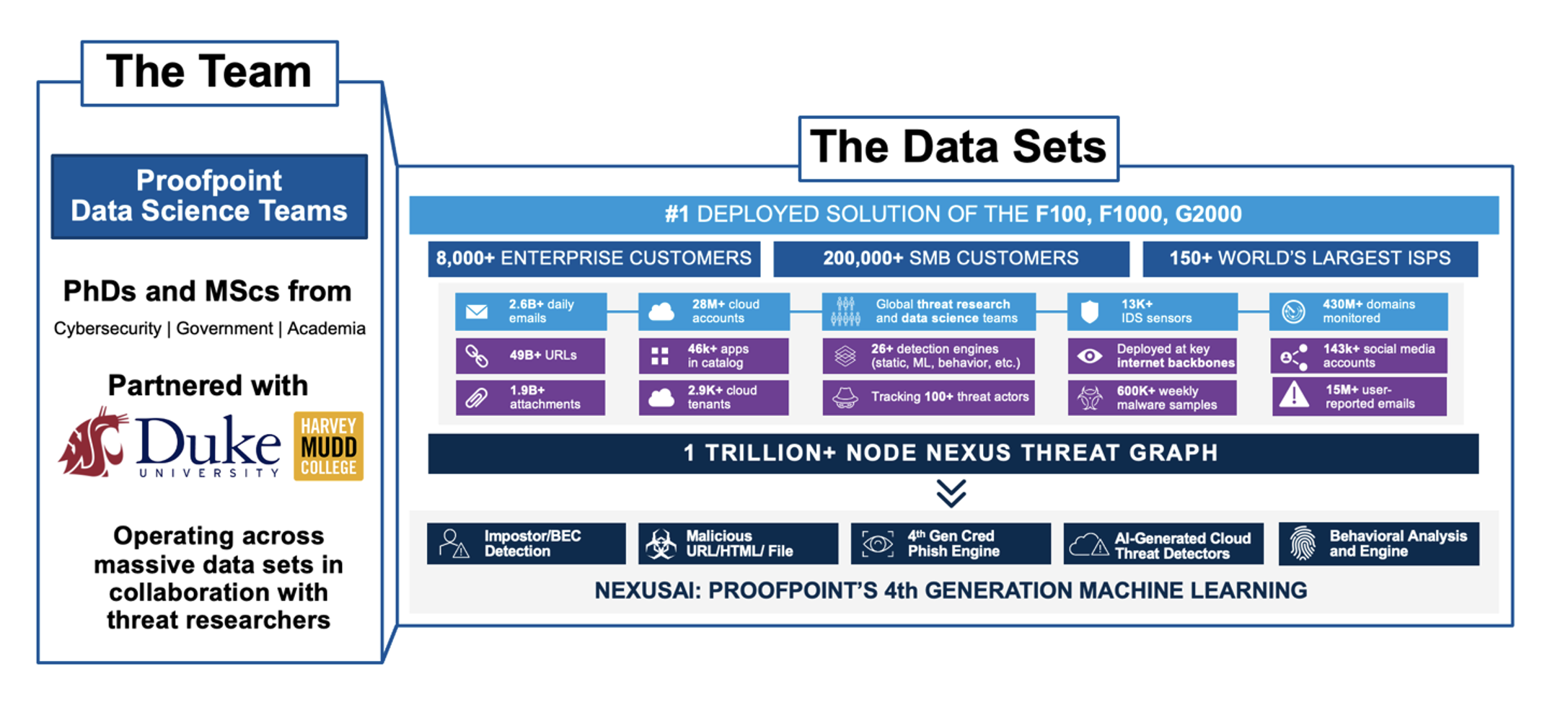

High-quality data is crucial to developing and deploying effective ML models. The quality of the training data greatly impacts the accuracy and overall performance of the resultant model. With our extensive network of customers and position upstream of other products, Proofpoint data sets are unrivalled in the security space. As a leading provider of security solutions, Proofpoint protects many of the world’s largest companies and processes billions of emails and tens of billions of URLs daily — giving us immediate insights into the latest threats and trends in the cybersecurity landscape.

We use these data to train our ML models and make sure they’re always up to date with the latest information about emerging threats. By doing so, our models identify and mitigate threats more effectively, leading to better overall protection for all our customers. This high-level view, combined with our team of ML experts and threat researchers, sets Proofpoint apart in the industry and provides our customers with a truly comprehensive security solution.

Figure 3. A sample of the data sets used by Proofpoint machine learning.

Process

Just having data isn’t enough, though. Even with outstanding data, the data must be mobilised to create leading ML models. The process around Proofpoint machine learning is another key success factor for ML at scale. It boils down to having good solutions for the following problems, or questions:

- How quickly can we train and deploy new models?

- How frequently are new models placed in production?

- How do our processes support ML detection of novel attacks?

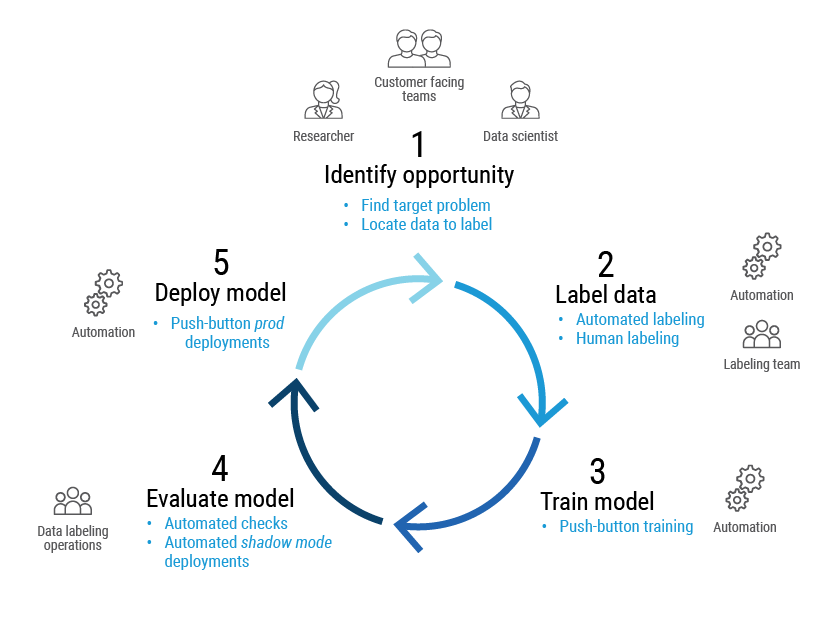

To address these questions (and their many variants), Proofpoint maintains a cycle of continuous improvement. Our data scientists, threat researchers and customer-facing teams identify opportunities for new models or improvements to existing systems. We then make sure that we have “ground truth” for our training data with a combination of expert human labellers and automated labelling from the Proofpoint threat protection platform.

After that, we can train the model with automated pipelines and validate it. Success criteria depend on the model use case. For example, if the model is identifying potentially risky URLs to predictively scan, we may prioritise recalling all threats at the cost of needless scans. But if the model were to block an email, higher prediction confidence may be required.

Once we validate the new model, we can deploy the model with the push of a button. The orchestration of this process is called machine learning operations or “MLOps”.

Figure 4. Overview of an MLOps life cycle at Proofpoint.

Our streamlined MLOps platform optimises our path to production and maximises the impact of our people. We have systematised this process with a set of common patterns accelerating the launch of new models. With minimal overhead, we can realise the value of innovations in the service of our customers as quickly as possible. This process is setting the bar for ML at scale, and Proofpoint was asked to share our MLOps best practices at AWS re:Invent.

The operational process to mobilise data and continuously improve models is what sets apart world-class ML teams. At Proofpoint, not only do we combine human expertise and machine learning for threat detection, but we also create better information protection systems by incorporating humans in the loop in our data classification products.

Join the team

If you’re interested in building leading-edge ML models while fighting cyber crime, join the Proofpoint team. At Proofpoint, ML engineers and researchers work together with universities such as Harvey Mudd College, Duke University and Washington State University to develop innovative solutions to complex problems. With incredible data, best-in-breed processes and tooling, and expert teams, Proofpoint is an ideal place to practice machine learning.

If you’re interested in learning more about career opportunities at Proofpoint, visit this page.

About the authors

Luis Blando, SVP & GM, Security Products Group, Proofpoint

Luis is SVP & GM, Security Products Group, where he leads the teams that focus on our core protection products for enterprises, service providers, and mobile; and Digital Risk product development; and threat intelligence and operations teams. He has over 20 years of leadership and development experience and has led large organisations focused on building security products. Prior to Proofpoint, Luis was VP of Security Operations Engineering for McAfee, VP of Detect Engineering for Intel Security Group, and President and GM of Intel Software Argentina. He has been granted five patents and is the author or co-author of several publications on various software topics, including object-oriented programming languages and recommendation engines.

Adam Starr, Head of AI/ML, Security Products Group, Proofpoint

Adam Starr leads the machine learning and artificial intelligence teams for the Security Products Group at Proofpoint. Adam’s team develops many of the detection systems behind Proofpoint’s core email security offerings: Email Protection, TAP, and Threat Response. Adam brings a unique blend of technical expertise, strategic leadership, and adventurous spirit to the cybersecurity field. His understanding of complex mathematical and computing principles, combined with his hands-on experience in threat detection and prevention, offers unique insight into the cyber-landscape. Adam received his Bachelor of Arts in Mathematics from Pomona College and is an MBA candidate at Stanford University.