AI is now embedded in how work gets done. Copilots assist employees in real time. Autonomous agents retrieve data, generate content, write code, and execute workflows across enterprise systems. AI capabilities are integrated directly into collaboration platforms, developer tools, and core business applications.

This is the agentic workspace: where people and AI agents work together, accessing the same data and acting across the same systems. For CIOs and CISOs, it introduces a new security and governance imperative.

The AI-Driven Governance Gap

According to the 2025 State of AI Security report from Acuvity (now part of Proofpoint), approximately 70% of organizations reported gaps in AI governance, and about 50% said they expected AI-related data loss within 12 months. As AI agents become embedded in enterprise workflows, this gap is no longer theoretical; it is operational.

The challenge is that most security architectures were not designed for this model. They were built to answer pragmatic questions: Does this user have access? Is this device trusted? Is this traffic allowed?

Autonomous AI fundamentally changes the risk equation. A single AI request can trigger dozens of downstream actions across email, cloud storage, CRM systems, and code repositories, often at machine speed and with limited human oversight. An agent may operate within authorized permissions and still take actions that are misaligned with the original purpose of the request.

This is semantic privilege escalation: technically permitted actions that are contextually inappropriate. Existing tools can validate credentials and monitor traffic, but they may not consistently determine whether AI behavior aligns with intent.
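The difference between "permitted" and "appropriate" can be made concrete with a small sketch. The example below is illustrative only and assumes a simplified model: the names `Action`, `AGENT_PERMISSIONS`, `INTENT_SCOPE`, and `classify` are hypothetical and do not correspond to any Proofpoint API. It shows how an action can pass a conventional permission check and still fall outside the scope of the request that triggered it.

```python
from dataclasses import dataclass


# Hypothetical model for illustration only; not a real product API.
@dataclass(frozen=True)
class Action:
    tool: str      # the capability being invoked, e.g. "crm.read"
    resource: str  # what the action touches


# Assumed: permissions granted to the agent's identity.
AGENT_PERMISSIONS = {"crm.read", "email.send", "storage.read"}

# Assumed: tools considered in scope for each kind of request.
INTENT_SCOPE = {
    "summarize_account": {"crm.read"},
}


def is_permitted(action: Action) -> bool:
    """Classic access control: does the agent hold this permission?"""
    return action.tool in AGENT_PERMISSIONS


def is_aligned(intent: str, action: Action) -> bool:
    """Intent check: is this tool within the scope of the original request?"""
    return action.tool in INTENT_SCOPE.get(intent, set())


def classify(intent: str, action: Action) -> str:
    """Separate 'not allowed' from 'allowed but contextually inappropriate'."""
    if not is_permitted(action):
        return "denied"
    if not is_aligned(intent, action):
        return "permitted_but_misaligned"
    return "aligned"
```

In this sketch, an agent asked to summarize an account that then tries to send email would be classified as `permitted_but_misaligned`: the credential check passes, but the action does not fit the request. A real system would need a far richer notion of intent than a static lookup table.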

From Access Control to Intent Validation

Humans are expected to operate with integrity when using enterprise systems. They are accountable for aligning their actions with business purpose. AI agents must be governed to the same standard.

AI systems, however, do not inherently understand intent. They execute within permissions and optimize toward objectives. They do not distinguish between what is allowed and what is appropriate.

Intent-based security addresses this challenge by continuously evaluating whether AI behavior, initiated autonomously or by a user, aligns with the original request and enterprise policy. This marks a fundamental shift:

- From validating access to validating alignment.

- From static policy enforcement to runtime inspection.

- From reactive investigation to continuous verification.
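One way to picture the three shifts above is a guard that sits between an agent and its tools, checks each call at the moment it is made, and records every decision. The sketch below is a minimal illustration under stated assumptions, not a description of how any product implements this: `RuntimeGuard`, its fields, and the tool names are all hypothetical, and the "intent" is reduced to a fixed set of allowed tool names.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Set


@dataclass
class RuntimeGuard:
    """Hypothetical guard that checks each tool call against the request's scope."""
    intent: str                              # label for the original request
    allowed_tools: Set[str]                  # assumed scope derived from that request
    tools: Dict[str, Callable[..., object]]  # tool name -> callable
    audit_log: List[dict] = field(default_factory=list)

    def call(self, tool: str, **kwargs):
        # Runtime inspection: the decision is made per call, not once at login.
        allowed = tool in self.allowed_tools and tool in self.tools
        # Continuous verification: every decision is recorded, allowed or not.
        self.audit_log.append(
            {"intent": self.intent, "tool": tool, "args": kwargs, "allowed": allowed}
        )
        if not allowed:
            raise PermissionError(
                f"{tool!r} is outside the scope of intent {self.intent!r}"
            )
        return self.tools[tool](**kwargs)


# Example wiring with stand-in tools (both lambdas are placeholders).
guard = RuntimeGuard(
    intent="summarize_account",
    allowed_tools={"crm_read"},
    tools={
        "crm_read": lambda account_id: f"record for {account_id}",
        "email_send": lambda to, body: "sent",
    },
)
summary_input = guard.call("crm_read", account_id="acct-1")
```

Here a later `guard.call("email_send", ...)` would raise `PermissionError` even though the tool is registered, and the attempt would still appear in `audit_log`. The design choice worth noting is that logging happens before the decision is enforced, so blocked attempts leave a trace for later review.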

Introducing the Agent Integrity Framework

To operationalize this shift, Proofpoint is introducing the Agent Integrity Framework.

Agent Integrity is the assurance that an AI agent operates within the boundaries of its intended purpose, authorized permissions, and expected behavior across every interaction, tool call, and data access.

The framework defines five pillars: Intent Alignment, Identity and Attribution, Behavioral Consistency, Auditability, and Operational Transparency. It also includes a five-phase maturity model guiding organizations from discovery through runtime enforcement.

Together, these give CIOs and CISOs a blueprint for governing the new control plane of the agentic workspace:

- Agent trust is the objective.

- The integrity framework provides a governing model.

- Intent-based security acts as the enforcement engine.

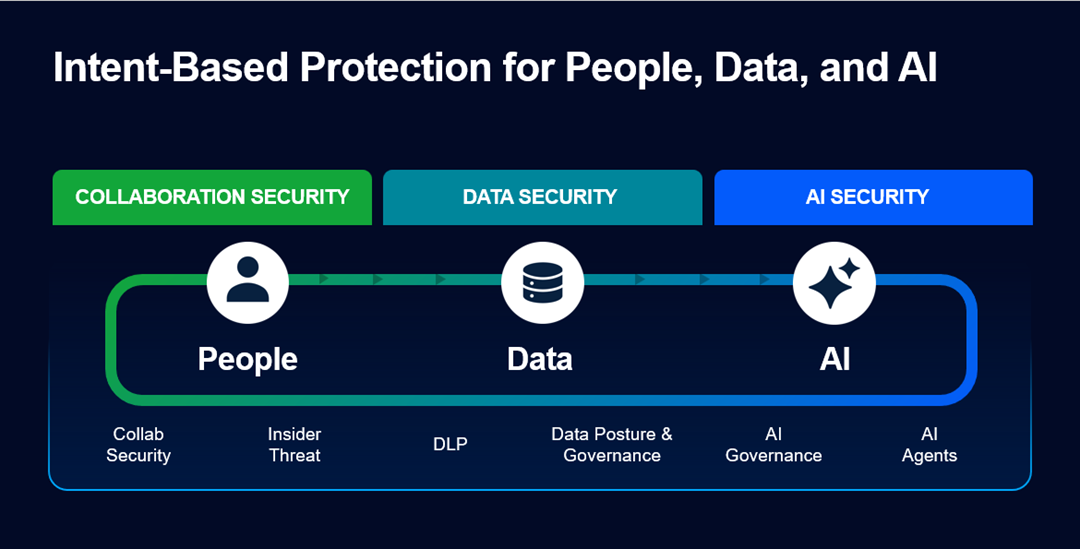

A Unified Platform for People, Data, and AI

To bring these capabilities to life, today we introduced Proofpoint AI Security, an industry-leading, unified, intent-based AI security solution designed to secure human and AI agent interactions with systems, data, and AI tools across the enterprise.

It builds on Proofpoint’s human-centric foundation. Our platform began with a simple premise: security should start with people. We evolved beyond static rules to intent- and behavior-based detection models at the human layer, analyzing communications and how users interact with sensitive data. That experience informs our ability to provide best-in-class collaboration security, insider risk management, and data loss prevention.

As AI became embedded in enterprise workflows, we extended this model to data. Earlier this year, we introduced AI Data Governance to secure sensitive information in AI-driven environments. Using agentic technologies, it addresses discovery, classification, and access governance at scale, dynamically identifying sensitive data and ensuring it is accessed only by the right people and the right AI agents. Unlike brittle, policy-heavy approaches, it adapts as data moves and evolves.

The final step was governing AI behavior itself. AI systems do more than access information; they reason and act across connected systems. With the launch of Proofpoint AI Security, we’ve added AI-native visibility and intent-based runtime protection across endpoints, browsers, and MCP connections, completing protection across people, data, and AI.

Integrity Is the New Control Plane

As AI adoption accelerates, organizations shouldn’t have to choose between moving fast and staying secure.

The real question is no longer whether an agent has access. It’s whether you can continuously verify that its behavior aligns with intent, at machine speed and across every system it touches.

That’s why intent-based security and AI agent integrity are becoming critical. Intent-based security provides the continuous verification, and AI agent integrity defines the standard of behavior. Together, they form a foundational enterprise capability for the age of autonomous AI.