Data Security for AI

Protect Sensitive Data with AI Data Security Controls

Monitor and control sensitive data in GenAI prompts, uploads, and responses across approved and shadow AI tools.

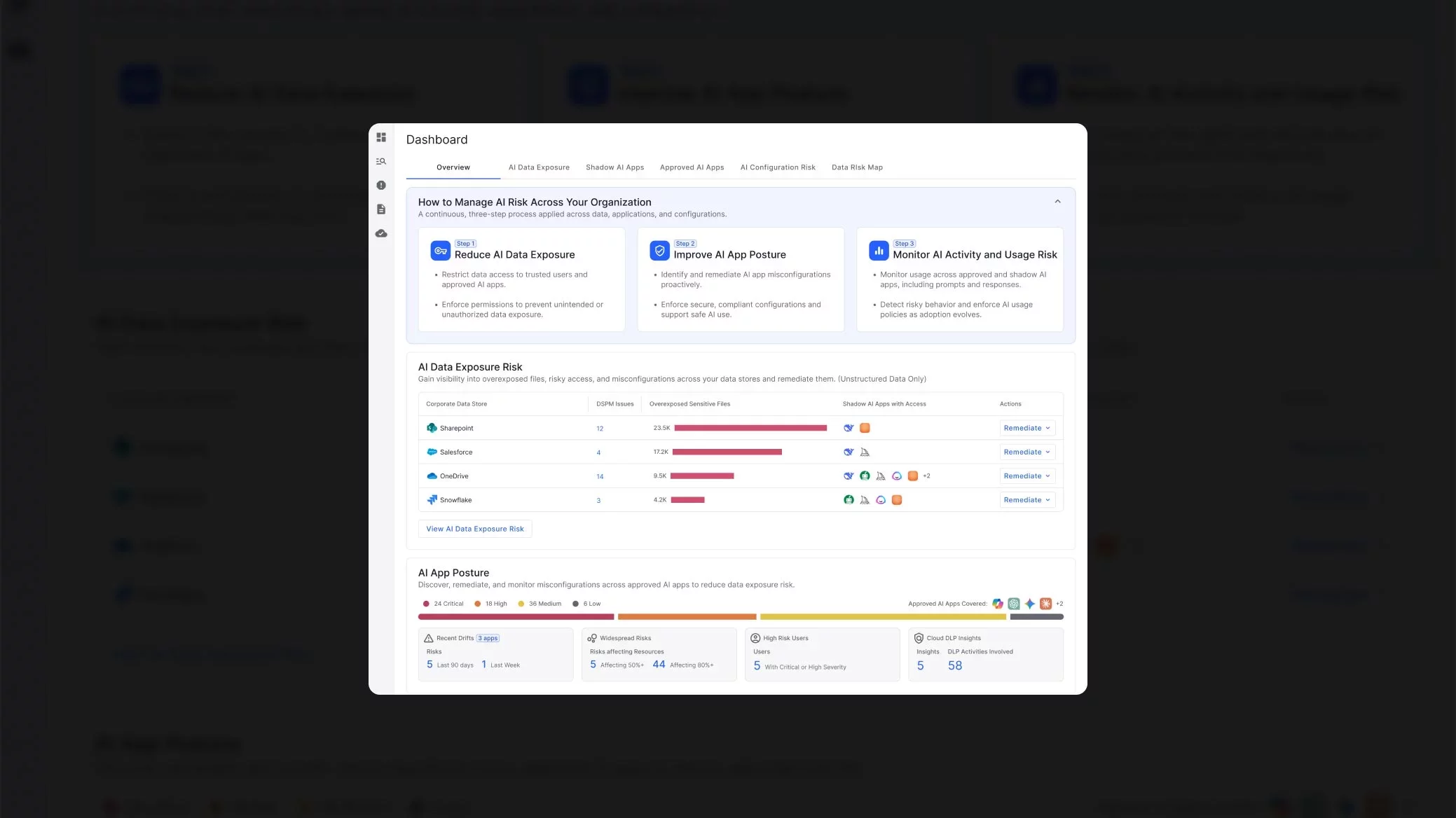

Reduce AI data exposure and control how data is used

Data security for AI demands a new approach. GenAI is expanding data exposure and insider risk across both approved and shadow AI tools. A unified AI data security solution gives security teams the visibility and control to reduce risk and protect sensitive data without slowing innovation.

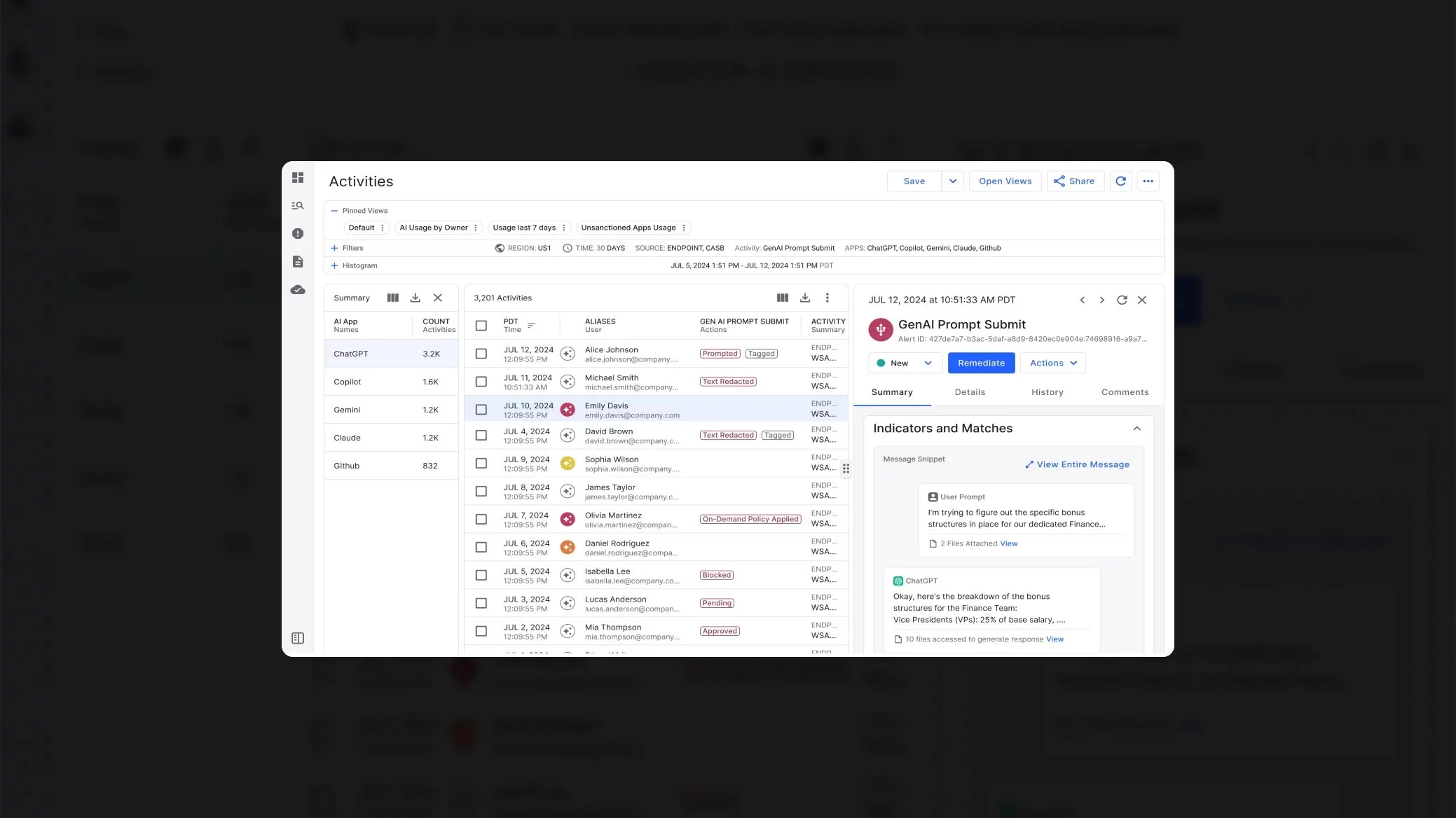

Gain visibility into how sensitive data is used and misused across shadow and approved AI apps, including high-risk users and risky behaviors.

Inspect prompts, uploads, and responses in real time to rapidly detect misuse and prevent unintended data exposure.

Block or redact sensitive data in prompts, uploads, and paste actions to prevent data loss without disrupting productivity.
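
To make the redaction step concrete, here is a minimal sketch of pattern-based redaction applied to a prompt before it leaves the browser or endpoint. It is an illustration only, not a description of how Proofpoint implements detection: the `PATTERNS` table, the `redact_prompt` function, and the placeholder format are all hypothetical, and production classifiers go well beyond three regular expressions.

```python
import re

# Hypothetical detectors for illustration; real data classifiers are far richer.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "AWS_ACCESS_KEY": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
}


def redact_prompt(prompt: str) -> tuple[str, list[str]]:
    """Return the redacted prompt and the labels of the detectors that fired."""
    findings = []
    for label, pattern in PATTERNS.items():
        # subn returns the rewritten text and the number of replacements made.
        prompt, count = pattern.subn(f"[REDACTED_{label}]", prompt)
        if count:
            findings.append(label)
    return prompt, findings


redacted, findings = redact_prompt("Email jane.doe@example.com, SSN 123-45-6789")
print(redacted)   # Email [REDACTED_EMAIL], SSN [REDACTED_SSN]
print(findings)   # ['EMAIL', 'SSN']
```

Redacting in place, rather than blocking the whole prompt, lets the user keep working while the sensitive values stay out of the AI tool.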

AI expands your data risk and security blind spots

Adoption of generative AI creates a new attack surface and a new class of security challenges. Uncontrolled use of both shadow and approved AI tools can expose sensitive data, including intellectual property, customer data, and credentials, through prompts or responses.

Traditional data governance and security measures lack visibility into data accessed and exposed by AI systems. As a result, organizations face growing compliance and governance gaps, and struggle to audit how third-party AI systems process, share, and store regulated data.

Govern shadow AI data use and accelerate secure AI adoption

Proofpoint Data Security for AI empowers you to securely adopt AI by providing visibility into approved and shadow AI data use. It unifies monitoring, data controls, and AI activity insights so you can reduce exposure and enable safe, scalable AI adoption.

Proofpoint Data Security for AI vs. traditional data security solutions

| Proofpoint Data Security for AI | Traditional Data Security | |

|---|---|---|

| Unified view of approved and shadow AI data risks |

Yes

|

No

|

| Consistent AI-specific data controls powered by a unified data security platform |

Yes

|

No

|

| Monitoring of AI prompts, uploads and responses for sensitive data and activity volume |

Yes

|

No

|

| Full capture of AI prompts and responses for audit and investigation |

Yes

|

No

|

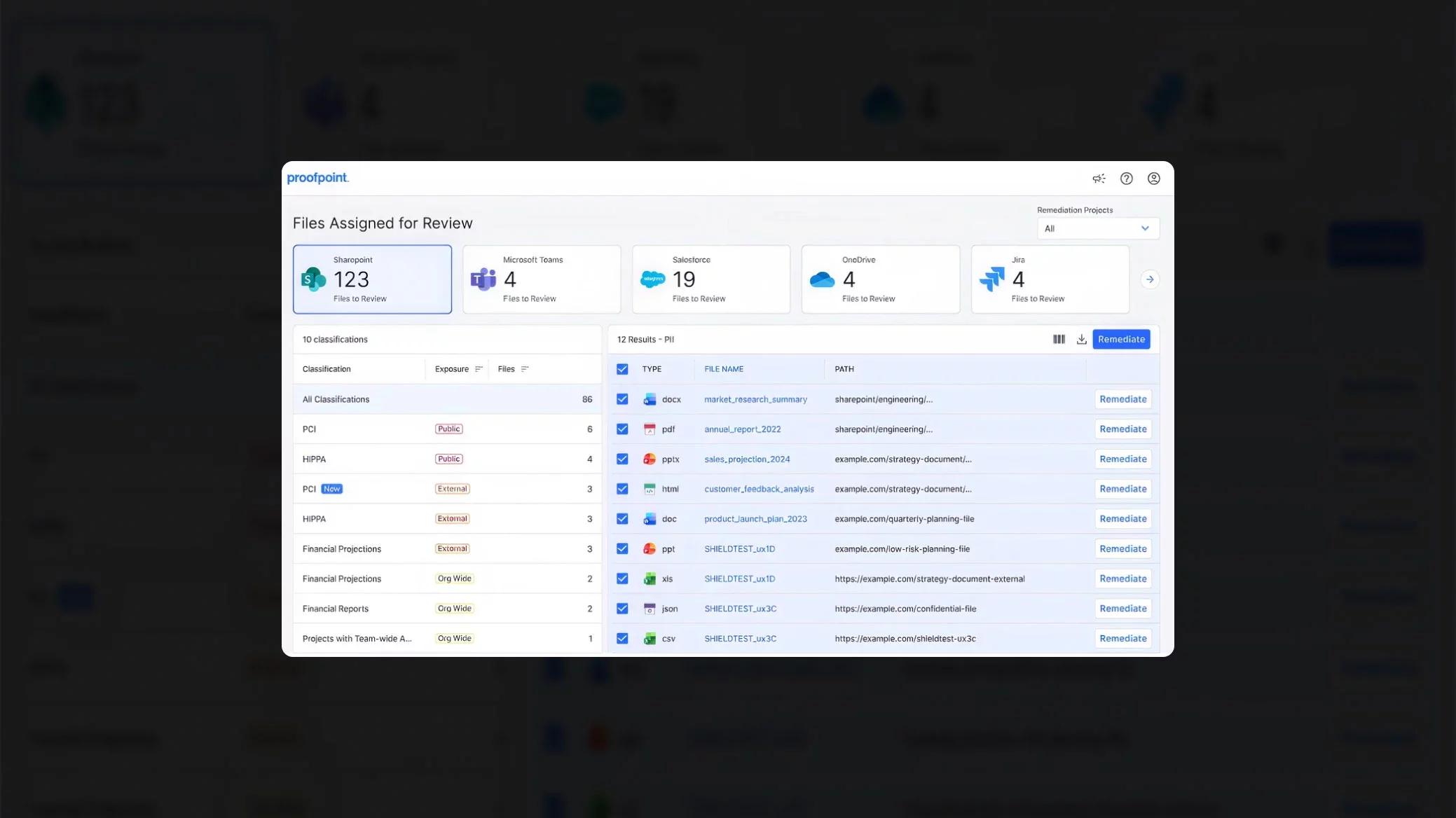

| File owner workflows for data access remediation |

Yes

|

No

|

FAQ


What risks does generative AI introduce when organizations lack proper data security?

Generative AI increases data exposure when governance is weak. AI systems can access, combine, and surface sensitive data at scale, including data that users would not normally find on their own. As a result, the risk of accidental disclosure and data breaches grows. Without visibility into AI activity, these risks compound quickly and create ongoing exposure.

Common risk areas include:

- Shadow AI tools that store prompts or uploaded data outside your control

- Users entering sensitive data into public or consumer AI platforms

- High-volume AI activity, such as bulk retrieval, summarizing, or cross-dataset analysis
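
As a rough illustration of how high-volume AI activity might be surfaced, the sketch below counts each user's AI events in a sliding time window and flags users who exceed a threshold. The function name, the five-minute window, and the threshold of 50 events are assumptions chosen for the example, not product defaults.

```python
from collections import defaultdict, deque


def flag_high_volume_users(events, window_seconds=300, threshold=50):
    """Flag users with more than `threshold` AI events inside any sliding window.

    `events` is an iterable of (timestamp_seconds, user) pairs, ordered by
    timestamp for each user.
    """
    recent = defaultdict(deque)  # user -> timestamps still inside the window
    flagged = set()
    for ts, user in events:
        window = recent[user]
        window.append(ts)
        # Drop events that have aged out of the window.
        while window and ts - window[0] > window_seconds:
            window.popleft()
        if len(window) > threshold:
            flagged.add(user)
    return flagged


# One request per second from alice; one request every 100 seconds from bob.
events = [(i, "alice") for i in range(60)] + [(i * 100, "bob") for i in range(60)]
print(flag_high_volume_users(events))  # {'alice'}
```

A fixed threshold is the simplest possible baseline; comparing each user against their own historical activity would reduce false positives for teams that legitimately use AI heavily.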


How is Data Security for AI different from traditional data security tools?

Data Security for AI focuses on how users interact with AI systems and how AI systems access, process, and expose data in real time. Traditional security measures, on the other hand, rely on static rules and do not provide visibility into AI-driven data use.

Key differences in Data Security for AI include:

- Real-time monitoring of prompts, uploads, and responses involving sensitive data

- Unified visibility across both approved and shadow AI tools

- Consistent data protection controls across AI interactions

This approach helps organizations protect data as AI use grows and changes.


How does data security for AI help organizations reduce shadow AI and unauthorized AI tool use?

Data security for AI reduces shadow AI risks by identifying unapproved tools accessing sensitive data, monitoring AI interactions, and providing clear actions to block or correct unsafe usage. It shows security teams how employees interact with AI systems, including prompts, uploads, and paste activities, whether those systems are approved or not.

Here’s how data security for AI reduces shadow AI:

- Discovers unapproved AI data use through web and SaaS activity analysis.

- Monitors and controls prompts and file uploads to detect when sensitive data is exposed to GenAI.

- Enables targeted remediation, including revoking access or enforcing policy controls.

- Identifies high‑risk users who rely on unsupported AI tools for daily tasks.

By combining discovery, monitoring, and remediation, organizations can enforce responsible AI use without slowing innovation.
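
The discovery step can be pictured as classifying outbound web requests against a list of sanctioned AI tools. The sketch below is a simplified assumption of how that might look: the hostnames, the `APPROVED_AI_HOSTS` and `KNOWN_AI_HOSTS` sets, and the `classify_destination` function are all hypothetical.

```python
from urllib.parse import urlparse

# Hypothetical inventories; in practice these would come from an app catalog.
APPROVED_AI_HOSTS = {"copilot.example-corp.com"}
KNOWN_AI_HOSTS = APPROVED_AI_HOSTS | {"chat.example-ai.com", "api.example-llm.io"}


def classify_destination(url: str) -> str:
    """Label a request URL as 'approved', 'shadow', or 'other'."""
    host = (urlparse(url).hostname or "").lower()
    if host in APPROVED_AI_HOSTS:
        return "approved"
    if host in KNOWN_AI_HOSTS:
        return "shadow"  # a recognized AI tool that has not been sanctioned
    return "other"


for url in (
    "https://copilot.example-corp.com/session",
    "https://chat.example-ai.com/v1/chat",
    "https://intranet.example-corp.com/wiki",
):
    print(url, "->", classify_destination(url))
```

Requests labeled `shadow` are the ones worth pairing with prompt and upload inspection, since they show sensitive data leaving for tools outside your control.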


How can my organization evaluate the AI readiness of our data security models?

You can evaluate AI readiness by assessing whether your data security models can support real‑time AI activity monitoring. AI readiness requires visibility into how AI systems interact with sensitive data and whether your controls can adapt to fast‑moving exfiltration and access patterns.

A structured readiness assessment should include:

- AI activity monitoring capability, ensuring prompts, uploads, and responses can be inspected for exposure.

- Shadow AI data loss detection to determine how much AI data loss is occurring outside approved systems.

- User behavior analysis to uncover AI-driven insider risk patterns.

A clear view across these areas shows whether your environment can support secure AI data use.


How does the EU AI Act impact data security for AI?

The EU AI Act introduces new requirements for how organizations develop and use AI systems, especially those that handle sensitive or regulated data. It focuses on risk management, transparency, and control over how AI systems operate.

For organizations using generative AI, this increases the need to monitor how data is accessed, processed, and exposed across both approved and shadow AI tools.

The EU AI Act works alongside the General Data Protection Regulation (GDPR), which governs how personal data is protected. Together, these mandates make data security for AI a key part of both compliance and risk management, requiring organizations to:

- Maintain visibility into how AI systems access and use data

- Prevent exposure of sensitive or regulated data in prompts or outputs

- Keep audit records of AI activity and data processing

- Enforce data governance and data security controls across AI systems
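
To show what an audit record of AI activity could contain, the sketch below builds one JSON line per interaction. The field names, the `AIAuditRecord` class, and the helper functions are hypothetical, and the choice to store a SHA-256 hash of the prompt instead of its full text is an assumption made for this example; an investigation workflow may need the full prompt and response retained under appropriate access controls.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass
class AIAuditRecord:
    timestamp: str       # ISO 8601, UTC
    user: str
    tool: str            # destination AI tool
    approved: bool       # whether the tool is sanctioned
    action: str          # e.g. "allowed", "redacted", "blocked"
    prompt_sha256: str   # hash of the prompt text (assumption: no raw text kept)
    findings: list       # labels of sensitive data types detected


def make_record(user, tool, approved, action, prompt, findings, now=None):
    """Build an audit record for a single AI interaction."""
    now = now or datetime.now(timezone.utc)
    return AIAuditRecord(
        timestamp=now.isoformat(),
        user=user,
        tool=tool,
        approved=approved,
        action=action,
        prompt_sha256=hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        findings=list(findings),
    )


def to_json_line(record: AIAuditRecord) -> str:
    """Serialize a record as one JSON line, suitable for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)


record = make_record(
    "alice", "chat.example-ai.com", False, "blocked",
    "Customer SSN is 123-45-6789", ["SSN"],
)
print(to_json_line(record))
```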